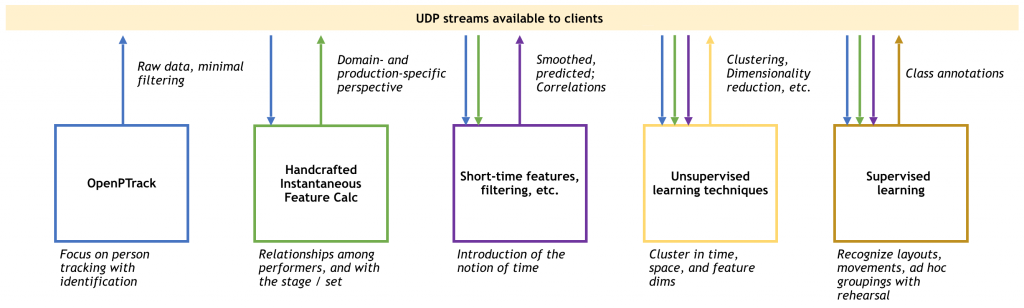

OpenPTrack senses where people are, but not how they move. That’s where OpenMoves comes in. Interpreting human motion data in real time is an important capability for mixed reality applications.

Under development at UCLA REMAP, OpenMoves is a new toolset for generating higher-level, short-time features from the movement trajectories gathered by OpenPTrack, and for learning and recognizing those trajectories. It is aimed especially at applications in the arts, education, and culture.
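To make "short-time features" concrete, here is a minimal sketch of the kind of per-person features one might derive from a track over a sliding time window. This is not OpenMoves code: the `(timestamp, x, y)` sample format, the one-second window, the `ShortTimeFeatures` class, and the particular features (mean speed, net displacement, straightness, heading) are all illustrative assumptions.

```python
import math
from collections import deque
from typing import Deque, Optional, Tuple

# Assumed sample format (hypothetical): (timestamp in seconds, x in meters, y in meters)
Sample = Tuple[float, float, float]


class ShortTimeFeatures:
    """Hypothetical sliding-window feature extractor for a single tracked person."""

    def __init__(self, window_s: float = 1.0):
        self.window_s = window_s
        self.samples: Deque[Sample] = deque()

    def update(self, t: float, x: float, y: float) -> Optional[dict]:
        """Add one position sample and return features over the current window."""
        self.samples.append((t, x, y))
        # Discard samples that have fallen out of the time window.
        while t - self.samples[0][0] > self.window_s:
            self.samples.popleft()
        if len(self.samples) < 2:
            return None

        pts = list(self.samples)
        t0, x0, y0 = pts[0]
        dt = t - t0
        if dt <= 0:
            return None

        # Total path length: sum of distances between consecutive positions.
        path = sum(math.dist(a[1:], b[1:]) for a, b in zip(pts, pts[1:]))
        # Straight-line distance from the window's first position to its last.
        displacement = math.dist((x0, y0), (x, y))

        return {
            "mean_speed": path / dt,               # m/s along the path
            "displacement": displacement,          # m, start to end of window
            "straightness": displacement / path if path > 0 else 1.0,
            "heading": math.atan2(y - y0, x - x0), # radians, net direction of travel
        }


if __name__ == "__main__":
    extractor = ShortTimeFeatures(window_s=1.0)
    for t, x, y in [(0.0, 0.0, 0.0), (0.5, 1.0, 0.0), (1.0, 2.0, 0.0)]:
        features = extractor.update(t, x, y)
    print(features)
```

Features like these summarize a short stretch of motion; a recognizer for longer movement trajectories could then consume a sequence of such feature vectors rather than raw positions.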

OpenMoves is in the early stages of development; more information is available in the OpenMoves section of the OpenPTrack wiki.

See also: S. Amin and J. Burke. “OpenMoves: A System for Interpreting Person-Tracking Data.” Proc. of the 5th Intl. Conf. on Movement and Computing. ACM, 2018.