OpenPTrack is an open source project launched in 2013 to create a scalable, multi-camera solution for person tracking.

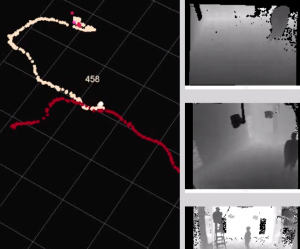

It enables many people to be tracked over large areas in real time.

It is designed for applications in education, arts and culture, as a starting point for exploring group interaction with digital environments.

We believe that interfaces to computers should extend beyond the fingertips, and be for more than just one person. Our objective is to enable “creative coders” to create body-based interfaces for large groups of people—for classrooms, art projects and beyond. With OpenPTrack as an open source building block, our research aims to make the development of meaningful interactions between groups of people and digital environments easier.

Based on the widely used, open source Robot Operating System (ROS), OpenPTrack provides:

- user-friendly camera network calibration;

- person detection from RGB/infrared/depth images;

- efficient multi-person tracking;

- UDP and NDN streaming of tracking data in JSON format.
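As an illustration of consuming the UDP stream listed above, the following minimal Python sketch receives datagrams and decodes their JSON payload. The port number, the `tracks` key, and the helper names `parse_tracks` and `listen` are assumptions made for this example, not OpenPTrack's documented configuration or message schema. Check the values used by your own deployment.

```python
import json
import socket
from typing import Dict, Iterator, List

# Hypothetical values: the real port and message schema depend on the deployment.
LISTEN_PORT = 21234
TRACKS_KEY = "tracks"


def parse_tracks(datagram: bytes) -> List[Dict]:
    """Decode one UDP payload and return its list of track objects.

    Returns an empty list for malformed or unexpected payloads.
    """
    try:
        message = json.loads(datagram.decode("utf-8"))
    except ValueError:
        # Covers both invalid UTF-8 and invalid JSON.
        return []
    if not isinstance(message, dict):
        return []
    tracks = message.get(TRACKS_KEY, [])
    if not isinstance(tracks, list):
        return []
    return [track for track in tracks if isinstance(track, dict)]


def listen(port: int = LISTEN_PORT, bufsize: int = 65535) -> Iterator[List[Dict]]:
    """Yield the parsed tracks from each datagram received on the given port."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind(("", port))
        while True:
            datagram, _sender = sock.recvfrom(bufsize)
            yield parse_tracks(datagram)
```

An application would typically iterate over `listen()` and map each track to whatever it drives, such as a cursor, a sound, or an on-screen avatar. Malformed datagrams yield an empty list rather than raising, so a single bad packet does not stop the loop.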

OpenPTrack V2 “Gnocchi” adds:

- trainable object tracking;

- pose recognition;

- Docker container support for easier deployment.

Read our overview of how OpenPTrack works for more details.

To explore what makes OpenPTrack different from other tracking technologies, see the tracking technology comparison.

With the advent of commercially available consumer depth sensors, and continued efforts in computer vision research to improve multi-modal image and point cloud processing, robust person tracking with the stability and responsiveness necessary to drive interactive applications is now possible at low cost. However, the results of this research remain difficult for application developers to use.

The creation of OpenPTrack was sparked by the belief that a disruptive project was needed for artists, creators and educators to work with robust real-time person tracking in real-world projects. OpenPTrack strives to support those in the arts, cultural and education sectors who wish to experiment with real-time tracking as an input for their applications. The project contains numerous state-of-the-art algorithms for tracking from RGB images, depth images, or both, and is built on a modular, node-based architecture that supports adding and removing sensor streams online.